In this post, we discuss two important performance metrics for Azure Blob Storage: latency and bandwidth.

Latency, in the context of Azure Blob Storage, is the amount of time an application must wait for an input/output request to complete.

Before we discuss the Azure Storage latency metrics, we must understand request rate. Request rate is measured in input/output operations per second (IOPS). For an application with a single outstanding request, it is calculated by dividing one second by the time it takes to complete one request. For example, assume that a request from a single-threaded application with one outstanding read/write operation takes 10 ms to complete.

Request Rate = 1 sec / 10 ms = 1000 ms / 10 ms = 100 IOPS

This means the application, with its one outstanding read/write operation, would achieve a request rate of 100 IOPS.
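The same arithmetic can be sketched in a few lines of Python. The function name and the `outstanding_requests` parameter are hypothetical, introduced only for illustration; scaling by the number of outstanding requests is an idealized assumption that per-request latency stays the same as concurrency grows.

```python
def request_rate_iops(latency_ms: float, outstanding_requests: int = 1) -> float:
    """Estimate the request rate (IOPS) from the per-request latency.

    Assumption: each outstanding request completes in `latency_ms`
    regardless of how many requests are in flight (idealized).
    """
    if latency_ms <= 0:
        raise ValueError("latency_ms must be positive")
    if outstanding_requests < 1:
        raise ValueError("outstanding_requests must be at least 1")
    # 1 second = 1000 ms, so one request stream completes 1000 / latency_ms per second.
    return outstanding_requests * 1000.0 / latency_ms


# The example from the text: one outstanding request taking 10 ms.
print(request_rate_iops(10))  # 100.0
```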

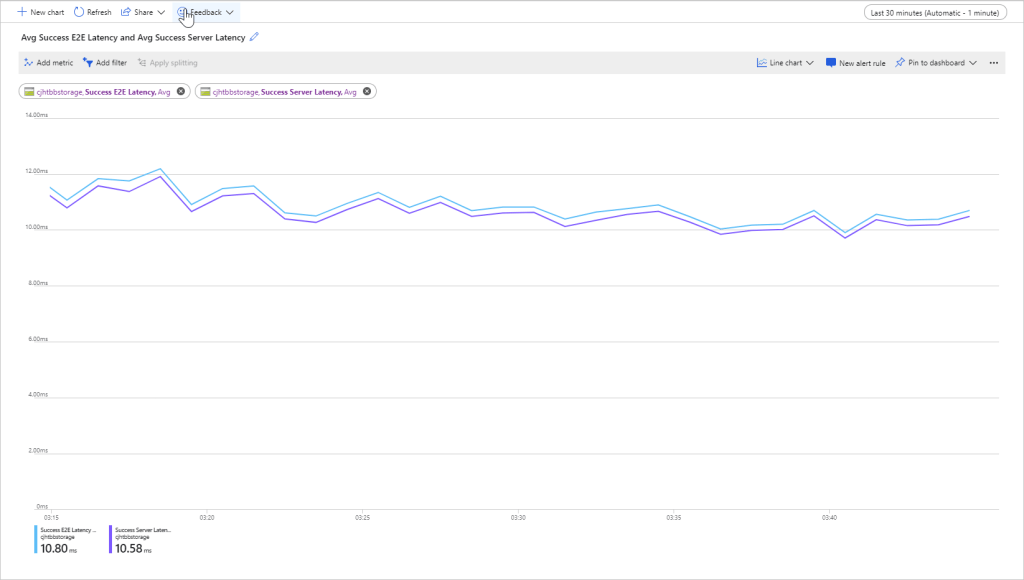

Two latency metrics are available in the Azure portal for monitoring the performance of block blob storage:

- End-to-End Latency: The time interval from when Azure Storage receives the first packet of the request until it receives the client's acknowledgement of the last packet of the response.

- Server Latency: The time interval from when Azure Storage receives the last packet of the request until it returns the first packet of the response.
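Since the server latency interval sits inside the end-to-end interval, the gap between the two gives a rough indication of how much time is spent transferring the request and response rather than processing the request. Below is a minimal sketch of that subtraction; the function name and the sample values are hypothetical and are not taken from any real measurement.

```python
def time_outside_server_ms(e2e_latency_ms: float, server_latency_ms: float) -> float:
    """Return the part of end-to-end latency not spent processing the request.

    By the definitions above, this covers receiving the request packets and
    sending the response until the client acknowledges the last packet.
    """
    if server_latency_ms > e2e_latency_ms:
        raise ValueError("server latency cannot exceed end-to-end latency")
    return e2e_latency_ms - server_latency_ms


# Hypothetical values: 25 ms end-to-end, of which 5 ms is server latency.
print(time_outside_server_ms(25.0, 5.0))  # 20.0
```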

Azure Storage bandwidth is a measure of the throughput of the data transfer. It can be calculated as follows:

Storage Bandwidth (KiB or MiB per second) = Request Rate (IOPS) x Request Size (KiB or MiB)

where 1 KiB = 1024 bytes and 1 MiB = 1024 KiB.

For example, if the request rate is 128 IOPS and the request size is 512 KiB, then:

Storage Bandwidth = 128 IOPS x 512 KiB = 65,536 KiB per second, or 64 MiB per second
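The bandwidth calculation, including the KiB-to-MiB conversion, can be sketched as follows. The function and constant names are hypothetical and chosen only for this example.

```python
KIB_PER_MIB = 1024  # 1 MiB = 1024 KiB


def bandwidth_mib_per_s(request_rate_iops: float, request_size_kib: float) -> float:
    """Compute storage bandwidth in MiB per second.

    Multiplies the request rate (IOPS) by the request size (KiB) to get
    KiB per second, then converts the result to MiB per second.
    """
    kib_per_s = request_rate_iops * request_size_kib
    return kib_per_s / KIB_PER_MIB


# The example from the text: 128 IOPS with 512 KiB requests.
print(bandwidth_mib_per_s(128, 512))  # 64.0
```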

Reference: https://docs.microsoft.com/en-us/azure/storage/blobs/storage-blobs-latency