In the previous post about the Delete activity, we briefly discussed the staging area. In a data warehouse load scenario, we may choose to stage the data in a temporary storage area (such as Azure Blob Storage or ADLS) instead of sending it directly to the sink. There can also be other reasons, such as IT or network security policies that prevent a direct connection between on-premises sources and cloud sinks (e.g., Azure Synapse Analytics, which requires port 1433 to be open).

To cater to these requirements, Azure Data Factory comes equipped with a feature that automates this two-stage process, known as Staged Copy. When staged copy is enabled, Data Factory first copies the data from the source to the staging data store (Azure Blob Storage or ADLS Gen2) and then moves it from the staging data store to the sink.
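To make the two hops concrete, here is a minimal, purely illustrative Python simulation. It does not call any Azure API: an in-memory list stands in for the source, a dictionary for the staging store and another list for the sink, and the function names (`copy_to_staging`, `copy_from_staging`, `staged_copy`) are hypothetical. The optional `compress` flag mimics the staging compression option using `gzip`; the choice of gzip is an assumption for illustration, not necessarily the codec Data Factory uses.

```python
import gzip
import json

def copy_to_staging(rows, staging_store, path, compress=False):
    """Hop 1: serialize the source rows and write them to the staging store."""
    payload = json.dumps(rows).encode("utf-8")
    if compress:
        # Assumption: gzip stands in for whatever compression the service applies.
        payload = gzip.compress(payload)
    staging_store[path] = payload

def copy_from_staging(staging_store, path, sink, compress=False):
    """Hop 2: read the staged payload, decompress if needed, load into the sink."""
    payload = staging_store[path]
    if compress:
        payload = gzip.decompress(payload)
    sink.extend(json.loads(payload.decode("utf-8")))

def staged_copy(source, sink, staging_store, path, compress=False):
    """Run both hops in order: source -> staging -> sink."""
    copy_to_staging(source, staging_store, path, compress)
    copy_from_staging(staging_store, path, sink, compress)

# Hypothetical in-memory stand-ins for the real stores.
source_rows = [{"id": 1, "name": "alpha"}, {"id": 2, "name": "beta"}]
staging = {}      # plays the role of a Blob / ADLS Gen2 container
sink_table = []   # plays the role of, e.g., a Synapse table

staged_copy(source_rows, sink_table, staging, "stagingcontainer/run-001.json", compress=True)
print(sink_table == source_rows)  # True
```

The point of the sketch is only the ordering: the sink never reads from the source directly, it reads whatever was written to the staging location.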

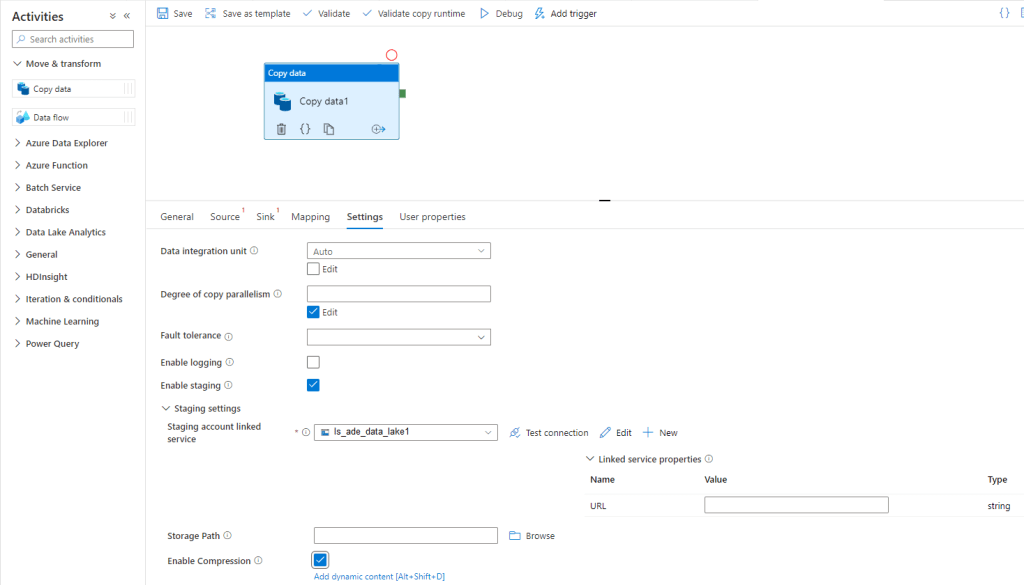

To enable staged copy mode, select the Copy Data activity, go to its Settings tab, and select the Enable staging checkbox, as shown in the screenshot below:

Selecting the checkbox brings up a new selection box in which we can specify the linked service for the staging data store we would like to use.

Once we have selected the linked service, we can specify the storage path. Another important setting is Enable compression. When this checkbox is selected, the data is compressed before being loaded into the staging data store and then decompressed before being loaded from the staging data store into the sink.
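For pipelines authored as JSON rather than through the UI, these settings correspond to the Copy activity's `enableStaging` flag and its `stagingSettings` object (`linkedServiceName`, `path`, `enableCompression`). The Python sketch below assembles such a definition as a dictionary and prints it as JSON. The activity name, the linked service name `StagingBlobStorage`, the staging path and the source/sink types are placeholders, and the dataset `inputs`/`outputs` a real activity needs are omitted, so treat it as an outline to check against the official Copy activity JSON reference rather than a deployable definition.

```python
import json

def copy_activity_with_staging(name, staging_linked_service, staging_path,
                               enable_compression=False):
    """Build a Copy activity definition with staged copy enabled (sketch only)."""
    return {
        "name": name,
        "type": "Copy",
        "typeProperties": {
            # Placeholder source/sink types: on-prem SQL Server into Synapse.
            "source": {"type": "SqlServerSource"},
            "sink": {"type": "SqlDWSink"},
            # Equivalent of ticking "Enable staging" on the Settings tab.
            "enableStaging": True,
            "stagingSettings": {
                # Linked service chosen for the staging store (Blob / ADLS Gen2).
                "linkedServiceName": {
                    "referenceName": staging_linked_service,  # hypothetical name
                    "type": "LinkedServiceReference",
                },
                # The "Storage path" setting (hypothetical container/folder).
                "path": staging_path,
                # The "Enable compression" checkbox.
                "enableCompression": enable_compression,
            },
        },
    }

activity = copy_activity_with_staging(
    "CopyOnPremToSynapse", "StagingBlobStorage", "stagingcontainer/adf",
    enable_compression=True,
)
print(json.dumps(activity, indent=2))
```

Setting `enable_compression=False` corresponds to leaving the Enable compression checkbox unticked.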

There is currently a limitation when copying between on-premises data stores that are connected through different self-hosted integration runtimes (IRs): copying data across different self-hosted IRs is not supported in either normal or staged copy mode.

Reference: https://docs.microsoft.com/en-us/azure/data-factory/copy-activity-performance-features#staged-copy

Hey Ash! Just reading this because we were having issues sourcing data from your Synapse with a data flow – chat next week and I’ll give you details 😉


Hi Todd, great to see your comment on here! Hope you find some of these posts useful.

PS: I am currently on vacation until New Year's, but feel free to send me a message on Teams.
