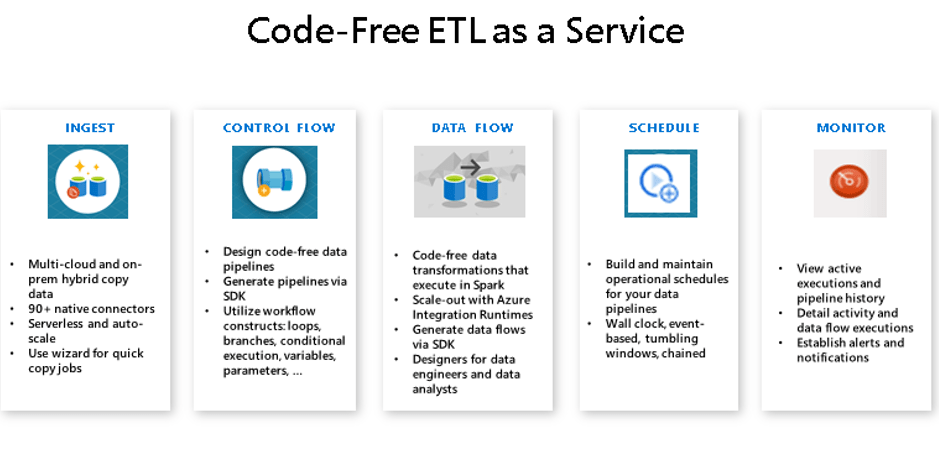

Azure Data Factory is a code-free ETL and data integration service for building data pipelines and workflows that ingest, transform and transport data at scale. It can also be used to automate maintenance tasks such as partitioning. Conceptually, Azure Data Factory is the cloud counterpart of SQL Server Integration Services (SSIS), the on-prem ETL tool that ships with SQL Server.

Let’s have a look at some of the components and concepts related to Azure Data Factory:

Pipeline: A pipeline is a logical grouping of activities that together perform a specific task. In its most basic form, a pipeline simply moves data from a source to a target.

Activity: An activity is a processing step within a pipeline, e.g. a copy activity that copies data from a source file to a target table in a data store. Activities fall into three categories: data movement, data transformation and control.

Linked Service: This is the equivalent of a connection manager in SSIS. Much like a connection string, it stores the information required to connect to an external resource. A linked service can represent either a data store or a compute resource.

Dataset: A dataset in Azure Data Factory represents the structure of the data. It is simply a named reference to the data that an activity uses, usually as an input or an output.

Trigger: As the name suggests, a trigger invokes an execution of the pipeline. It determines when a pipeline execution needs to be kicked off.

Control Flow: This refers to the orchestration of the activities within a pipeline. It can include conditional branching, outcome-dependent data partitioning, passing parameters, etc. An important feature of control flow is looping containers, e.g. the ForEach iterator.
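To see how these concepts reference each other, here is a minimal sketch in Python that builds the definitions as plain dictionaries, loosely following the JSON layout Data Factory uses for its objects. All object names (`BlobStorageLS`, `SourceFileDataset`, `CopyFileToTablePipeline`, `DailyTrigger`, etc.) are hypothetical, the definitions are trimmed (no connection details, no dataset schema), and the property names should be checked against the official documentation before use. The helper `unresolved_references` is not part of any Azure library; it is only there to illustrate the chain activity → dataset → linked service.

```python
import json


def reference(name, ref_type):
    """Build a named reference to another Data Factory object."""
    return {"referenceName": name, "type": ref_type}


# Linked services: how to connect (connection details omitted in this sketch).
linked_services = [
    {"name": "BlobStorageLS", "properties": {"type": "AzureBlobStorage"}},
    {"name": "SqlDatabaseLS", "properties": {"type": "AzureSqlDatabase"}},
]

# Datasets: which data to use; each one points at a linked service.
datasets = [
    {"name": "SourceFileDataset",
     "properties": {"type": "DelimitedText",
                    "linkedServiceName": reference("BlobStorageLS", "LinkedServiceReference")}},
    {"name": "TargetTableDataset",
     "properties": {"type": "AzureSqlTable",
                    "linkedServiceName": reference("SqlDatabaseLS", "LinkedServiceReference")}},
]

# Activity: a single copy step with one input and one output dataset.
copy_activity = {
    "name": "CopyFileToTable",
    "type": "Copy",
    "inputs": [reference("SourceFileDataset", "DatasetReference")],
    "outputs": [reference("TargetTableDataset", "DatasetReference")],
    "typeProperties": {
        "source": {"type": "DelimitedTextSource"},
        "sink": {"type": "AzureSqlSink"},
    },
}

# Pipeline: a logical grouping of activities.
pipeline = {
    "name": "CopyFileToTablePipeline",
    "properties": {"activities": [copy_activity]},
}

# Trigger: decides when the pipeline runs (here, once a day).
trigger = {
    "name": "DailyTrigger",
    "properties": {
        "type": "ScheduleTrigger",
        "typeProperties": {"recurrence": {"frequency": "Day", "interval": 1}},
        "pipelines": [
            {"pipelineReference": reference(pipeline["name"], "PipelineReference")}
        ],
    },
}


def unresolved_references(pipeline, datasets, linked_services):
    """Return dataset or linked service names the pipeline needs but that are not defined."""
    dataset_by_name = {d["name"]: d for d in datasets}
    linked_service_names = {ls["name"] for ls in linked_services}
    missing = []
    for activity in pipeline["properties"]["activities"]:
        for ref in activity.get("inputs", []) + activity.get("outputs", []):
            ds = dataset_by_name.get(ref["referenceName"])
            if ds is None:
                missing.append(ref["referenceName"])
                continue
            ls_name = ds["properties"]["linkedServiceName"]["referenceName"]
            if ls_name not in linked_service_names:
                missing.append(ls_name)
    return missing


if __name__ == "__main__":
    print(json.dumps(pipeline, indent=2))
    print("unresolved:", unresolved_references(pipeline, datasets, linked_services))
```

The point of the sketch is the direction of the references: the trigger names a pipeline, the pipeline contains activities, each activity names its datasets, and each dataset names a linked service.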

Azure Data Factory provides a UI where developers can use all of the above features without writing any code. It is also possible to interact with Azure Data Factory programmatically using Azure PowerShell, .NET, Python and the REST API.
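As a rough illustration of the REST route, the sketch below only builds the Azure Resource Manager URLs for a pipeline and for starting a run of it. It makes no network call and handles no authentication. The subscription, resource group, factory and pipeline names are placeholders, and the `api-version` value is an assumption that should be confirmed against the REST API reference.

```python
from urllib.parse import quote

MANAGEMENT_ENDPOINT = "https://management.azure.com"
API_VERSION = "2018-06-01"  # assumption: verify against the current REST API reference


def pipeline_resource_url(subscription_id, resource_group, factory_name, pipeline_name):
    """Build the URL that identifies a pipeline inside a data factory."""
    path = "/".join([
        "subscriptions", quote(subscription_id, safe=""),
        "resourceGroups", quote(resource_group, safe=""),
        "providers", "Microsoft.DataFactory",
        "factories", quote(factory_name, safe=""),
        "pipelines", quote(pipeline_name, safe=""),
    ])
    return f"{MANAGEMENT_ENDPOINT}/{path}?api-version={API_VERSION}"


def create_run_url(subscription_id, resource_group, factory_name, pipeline_name):
    """Build the URL used to start a run of the pipeline (sent as a POST request)."""
    base, query = pipeline_resource_url(
        subscription_id, resource_group, factory_name, pipeline_name
    ).split("?", 1)
    return f"{base}/createRun?{query}"


if __name__ == "__main__":
    # Placeholder identifiers, not real resources.
    print(pipeline_resource_url("sub-id", "my-rg", "my-factory", "CopyPipeline"))
    print(create_run_url("sub-id", "my-rg", "my-factory", "CopyPipeline"))
```

In practice, a request to these URLs would also need an Azure Active Directory bearer token, which is why most people reach for the PowerShell, .NET or Python SDKs instead of calling the REST API by hand.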

Reference: https://docs.microsoft.com/en-us/azure/data-factory/introduction
