Azure Data Factory helps users orchestrate and automate activities within the Azure environment. One of the primary use cases for Azure Data Factory Pipelines is running Data Flows that integrate, transform, and move data. Once the Data Flows have been created, the next step is scheduling them. This is done through Data Factory Pipeline execution, which can be either a triggered execution or a manual execution.

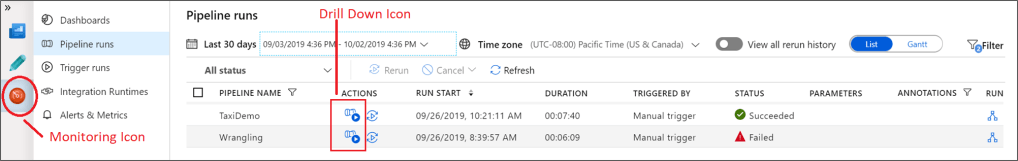
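Besides the portal, a manual (on-demand) Pipeline execution can also be started and followed from code. The sketch below assumes the `azure-mgmt-datafactory` Python SDK, where `client` is a `DataFactoryManagementClient`; it relies only on the `pipelines.create_run` and `pipeline_runs.get` operations. The function name `run_pipeline_and_wait` and the resource group, factory, and pipeline names are hypothetical placeholders.

```python
import time

# Pipeline run statuses after which polling can stop.
TERMINAL_STATUSES = {"Succeeded", "Failed", "Cancelled"}


def run_pipeline_and_wait(client, resource_group, factory_name, pipeline_name,
                          parameters=None, poll_seconds=15, timeout_seconds=3600,
                          sleep=time.sleep):
    """Start an on-demand Pipeline run and poll its status until it finishes.

    Assumption: `client` is an azure.mgmt.datafactory.DataFactoryManagementClient,
    or any object exposing the same `pipelines` / `pipeline_runs` operations.
    Returns a (run_id, final_status) tuple.
    """
    # Start the run; the response carries the Pipeline-level Run ID.
    run = client.pipelines.create_run(
        resource_group, factory_name, pipeline_name, parameters=parameters or {}
    )
    waited = 0
    while True:
        # Fetch the current state of this Pipeline run.
        pipeline_run = client.pipeline_runs.get(resource_group, factory_name, run.run_id)
        if pipeline_run.status in TERMINAL_STATUSES:
            return run.run_id, pipeline_run.status
        if waited >= timeout_seconds:
            raise TimeoutError(f"Run {run.run_id} is still {pipeline_run.status}")
        sleep(poll_seconds)
        waited += poll_seconds
```

With a real client this would be called as `run_pipeline_and_wait(adf_client, "my-rg", "my-factory", "my-pipeline")`. The `sleep` function is injectable only so that the polling loop can be exercised without waiting.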

There is also an option to execute the Pipeline once as a test run. During the test run, developers can monitor the Pipeline and every Activity it contains (including Data Flows). To open the monitoring screen, click the Monitor icon in the left-hand pane; this shows monitoring information at the Pipeline level. To drill down further and view the performance of the individual Activities within the Pipeline, click the highlighted icon, as shown in the image below:

The drill-down screen shows statistics for the execution of each individual Activity. Note that each Activity run has its own Run ID and execution statistics, which differ from the corresponding values at the Pipeline level. Recording these Activity-level values helps track performance metrics over time and can help resolve future performance issues.

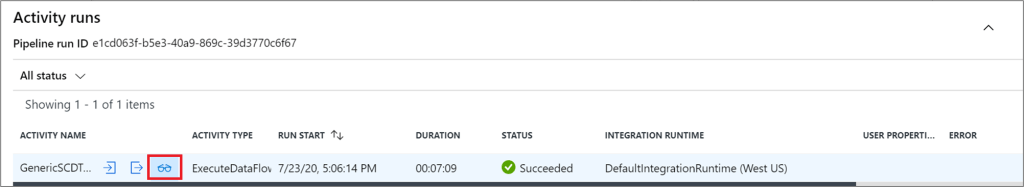
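The same Activity-level statistics can also be collected outside the UI, for example through the SDK's `activity_runs.query_by_pipeline_run` operation or the equivalent REST endpoint, which returns one record per Activity run. The sketch below assumes those records have already been gathered from several Pipeline runs into plain dictionaries using the REST field names (`pipelineRunId`, `activityRunId`, `activityName`, `status`, `durationInMs`); the SDK objects expose the same values as snake_case attributes. The function name `summarize_activity_runs` is hypothetical. It groups runs by Activity, keeps each Activity's own Run IDs separate from the parent Pipeline Run IDs, and summarises durations so they can be compared over a period.

```python
from collections import defaultdict
from statistics import mean


def summarize_activity_runs(records):
    """Summarise Activity-run durations per Activity name.

    Each record is assumed to be a dict shaped like an Activity run from the
    Data Factory REST API, with "pipelineRunId", "activityRunId",
    "activityName", "status" and "durationInMs" keys.
    """
    by_activity = defaultdict(list)
    for rec in records:
        # Only successful runs with a recorded duration are compared.
        if rec.get("status") == "Succeeded" and rec.get("durationInMs") is not None:
            by_activity[rec["activityName"]].append(rec)

    summary = {}
    for name, runs in by_activity.items():
        durations = [r["durationInMs"] for r in runs]
        summary[name] = {
            "runs": len(runs),
            # Activity Run IDs differ from the Pipeline Run IDs they belong to.
            "activity_run_ids": [r["activityRunId"] for r in runs],
            "pipeline_run_ids": sorted({r["pipelineRunId"] for r in runs}),
            "avg_ms": mean(durations),
            "max_ms": max(durations),
        }
    return summary
```

Storing this summary after each scheduled run gives a simple baseline: a Data Flow whose average or maximum duration starts to grow stands out before it becomes a performance problem.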

To view more detailed information about the execution of a single Activity, drill down further by clicking the “glasses” icon, as shown in the image below:

Performance monitoring is closely linked with performance optimization. To help with optimization, Data Flow Execution Plans provide an easy-to-understand, high-level view of the overall execution of the Pipeline. This will be discussed in a future post.

Reference: Monitoring mapping data flows – Azure Data Factory | Microsoft Docs