Azure Data Factory is the primary task orchestration and extract, transform, and load (ETL) tool on the Azure cloud. The easiest way to move and transform data with Azure Data Factory is to use the Copy Activity within a Pipeline. To read more about Azure Data Factory Pipelines and Activities, please have a look at this post. Also, please check out the previous blog post for an overview of the Copy Activity.

Copy Activity is a simple activity designed to copy and move data from one location to another. To do this, it uses the connectors that Azure Data Factory provides for each supported data store. As in SSIS, there are two sets of components:

Source: This is the data store where the data we want to copy currently resides.

Sink: This is the data store where we want the data to be loaded. If you are familiar with the SSIS Data Flow Task, this is similar to the Destination component.

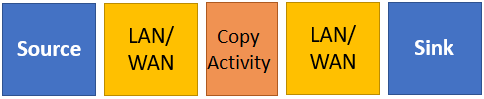

As shown in the diagram above, the Copy Activity simply reads the data from the Source and writes it to the Sink, using the underlying network (LAN/WAN) on each side.
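Under the hood, a pipeline is stored as JSON, and the Source and Sink appear as the `source` and `sink` settings under a Copy Activity's `typeProperties`. The sketch below builds a minimal definition of that shape as a Python dict. It is an illustration, not a complete deployable pipeline: the pipeline, activity, and dataset names are hypothetical, and the `DelimitedTextSource`/`AzureSqlSink` pair is just one example of a CSV-in-Blob-Storage to Azure SQL copy.

```python
import json


def make_copy_activity(name, source_dataset, sink_dataset, source_type, sink_type):
    """Build a minimal Copy Activity definition as a plain dict.

    The Source and Sink settings live under typeProperties, while the
    datasets they read from and write to are referenced via inputs/outputs.
    """
    return {
        "name": name,
        "type": "Copy",
        # Dataset the Source reads from
        "inputs": [{"referenceName": source_dataset, "type": "DatasetReference"}],
        # Dataset the Sink writes to
        "outputs": [{"referenceName": sink_dataset, "type": "DatasetReference"}],
        "typeProperties": {
            "source": {"type": source_type},
            "sink": {"type": sink_type},
        },
    }


# Hypothetical names: copy a CSV file in Blob Storage into an Azure SQL table.
pipeline = {
    "name": "CopyPipeline",
    "properties": {
        "activities": [
            make_copy_activity(
                "CopyBlobToSql",
                "BlobInputDataset",
                "SqlOutputDataset",
                "DelimitedTextSource",
                "AzureSqlSink",
            )
        ]
    },
}

print(json.dumps(pipeline, indent=2))
```

Swapping the Source or Sink to another data store means changing the `type` values and the referenced datasets; the overall shape of the activity stays the same.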

Both Source and Sink are available as configuration settings within the Copy Activity. Each must be linked to a Dataset, and each Dataset in turn references a Linked Service. Linked Services and Datasets in Azure Data Factory will be covered in a future post.
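To make the chain from Copy Activity to Dataset to Linked Service concrete, the following sketch follows those references by hand. The definitions are trimmed to the reference fields only, and every name (`BlobStorageLS`, `AzureSqlLS`, the dataset names, and the helper `resolve`) is hypothetical. Azure Data Factory performs this resolution itself, so the helper exists only to show how the pieces point at each other.

```python
# Hypothetical Linked Services: the connection to each data store.
linked_services = {
    "BlobStorageLS": {"properties": {"type": "AzureBlobStorage"}},
    "AzureSqlLS": {"properties": {"type": "AzureSqlDatabase"}},
}

# Hypothetical Datasets: each one references a Linked Service by name.
datasets = {
    "BlobInputDataset": {
        "properties": {
            "type": "DelimitedText",
            "linkedServiceName": {"referenceName": "BlobStorageLS", "type": "LinkedServiceReference"},
        }
    },
    "SqlOutputDataset": {
        "properties": {
            "type": "AzureSqlTable",
            "linkedServiceName": {"referenceName": "AzureSqlLS", "type": "LinkedServiceReference"},
        }
    },
}

# A Copy Activity references its Source dataset via inputs and its Sink dataset via outputs.
copy_activity = {
    "name": "CopyBlobToSql",
    "type": "Copy",
    "inputs": [{"referenceName": "BlobInputDataset", "type": "DatasetReference"}],
    "outputs": [{"referenceName": "SqlOutputDataset", "type": "DatasetReference"}],
}


def resolve(dataset_ref):
    """Follow a dataset reference to (dataset type, linked service name, linked service type)."""
    dataset = datasets[dataset_ref["referenceName"]]["properties"]
    ls_name = dataset["linkedServiceName"]["referenceName"]
    return dataset["type"], ls_name, linked_services[ls_name]["properties"]["type"]


source_chain = resolve(copy_activity["inputs"][0])
sink_chain = resolve(copy_activity["outputs"][0])
print("Source:", source_chain)
print("Sink:", sink_chain)
```

Because the connection details live only in the Linked Service, several Datasets (and therefore several Sources or Sinks) can share one connection.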

There are some other important concepts related to the Copy Activity, such as Dataset Mapping, which will be discussed in future posts.

For a list of supported data stores and formats for Source and Sink, please visit the MS Docs link below.

Reference: https://docs.microsoft.com/en-us/azure/data-factory/copy-activity-overview
