We discussed the source and sink components of Azure Data Factory in the previous post. Other components need to be created before we can start building Data Factory Pipelines. Two of these are Linked Services and Datasets.

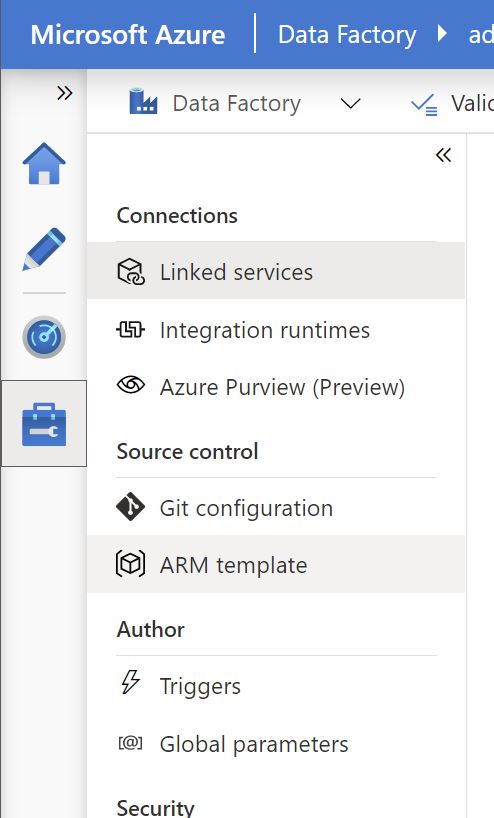

Linked Service: A Linked Service contains the connection details (the connection string) for a data store, e.g. database server, database name, file path, URL etc. A Linked Service might also include authentication information for the connection, e.g. login ID, password, API keys etc., stored in an encrypted format. Linked Services can be created under the Manage tab in the UI (screenshot below). Linked Services can be parameterized, so that one Linked Service can be reused across data stores of the same type, e.g. a generic Database Linked Service used to access multiple databases. This will be discussed in detail in a future post.

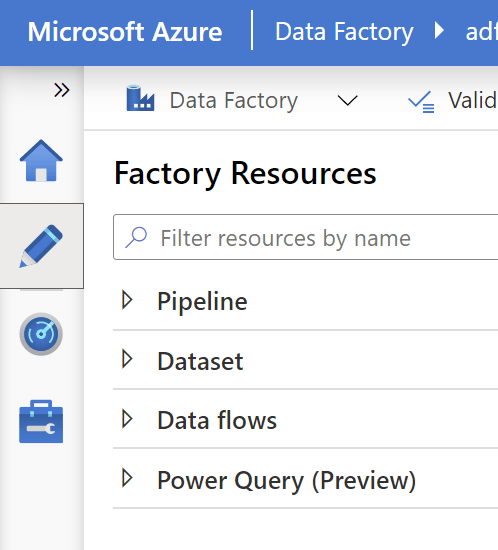
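Behind the UI, a Linked Service is stored as a JSON definition. The snippet below is a minimal sketch of what such a definition might look like for an Azure SQL Database, built as a Python dictionary. The helper `make_sql_linked_service`, the name `AzureSqlDatabaseLS`, and the server and database values are hypothetical placeholders, and a real definition would also carry authentication settings.

```python
import json


def make_sql_linked_service(name, server, database):
    """Build a linked service definition as a plain dictionary.

    All argument values are illustrative; no credentials are included here.
    """
    connection_string = (
        f"Server=tcp:{server}.database.windows.net,1433;"
        f"Database={database};"
    )
    return {
        "name": name,
        "properties": {
            # "AzureSqlDatabase" is the linked service type for Azure SQL Database.
            "type": "AzureSqlDatabase",
            "typeProperties": {"connectionString": connection_string},
        },
    }


# Hypothetical server and database names.
linked_service = make_sql_linked_service("AzureSqlDatabaseLS", "myserver", "SalesDb")
print(json.dumps(linked_service, indent=2))
```

The connection details live in one place, so every Dataset built on top of this Linked Service reuses them instead of repeating the connection string.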

Datasets: A Dataset is a reference to a data store and points to a specific object within the store that the Linked Service connects to. For example, if a Linked Service points to a database instance, the Dataset can refer to a specific table that we would like to use as source or sink in the Data Factory Pipeline. Datasets are created on top of an existing Linked Service in the Author tab (screenshot below). Once created, Datasets can be used as the source or sink in the properties of a Data Movement activity in a Data Factory Pipeline. One such activity is the Copy Data Activity, which accepts Dataset names for its Source and Sink.
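To make the relationship concrete, here is a rough sketch of a Dataset definition and a Copy activity that uses it, again written as Python dictionaries mirroring the JSON that Data Factory stores. The names `CustomersTable`, `CustomersArchive`, `AzureSqlDatabaseLS`, and `CopyCustomers` are assumptions made up for this example, and the sink Dataset is referenced by name only, not defined here.

```python
import json

# A dataset that points at one table through a linked service (hypothetical names).
customers_dataset = {
    "name": "CustomersTable",
    "properties": {
        "type": "AzureSqlTable",
        "linkedServiceName": {
            "referenceName": "AzureSqlDatabaseLS",
            "type": "LinkedServiceReference",
        },
        "typeProperties": {"schema": "dbo", "table": "Customers"},
    },
}

# A Copy activity refers to datasets by name for its source and sink.
copy_activity = {
    "name": "CopyCustomers",
    "type": "Copy",
    "inputs": [{"referenceName": "CustomersTable", "type": "DatasetReference"}],
    "outputs": [{"referenceName": "CustomersArchive", "type": "DatasetReference"}],
    "typeProperties": {
        "source": {"type": "AzureSqlSource"},
        "sink": {"type": "AzureSqlSink"},
    },
}

print(json.dumps(customers_dataset, indent=2))
print(json.dumps(copy_activity, indent=2))
```

Note the chain of references: the activity names a Dataset, and the Dataset names a Linked Service. Neither the activity nor the Dataset repeats the connection details.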

Before we can perform a debug run of the pipeline, Data Factory runs a validation step that checks all the underlying components of the pipeline, including its Linked Services and Datasets.
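One kind of problem such a validation can catch is a broken reference, for example a Dataset that names a Linked Service that does not exist. The sketch below illustrates that idea only; it is not Data Factory's actual validation logic, and the function `find_broken_references` and its simplified input shapes are hypothetical.

```python
def find_broken_references(linked_service_names, datasets, copy_activities):
    """Return a list of messages for references that point at nothing.

    linked_service_names: set of linked service names
    datasets: dict mapping dataset name -> linked service name it uses
    copy_activities: list of dicts with "name", "source" and "sink" dataset names
    """
    errors = []
    # Every dataset must sit on top of an existing linked service.
    for dataset_name, linked_service_name in datasets.items():
        if linked_service_name not in linked_service_names:
            errors.append(
                f"Dataset '{dataset_name}' uses unknown linked service "
                f"'{linked_service_name}'"
            )
    # Every activity must use existing datasets for its source and sink.
    for activity in copy_activities:
        for role in ("source", "sink"):
            dataset_name = activity[role]
            if dataset_name not in datasets:
                errors.append(
                    f"Activity '{activity['name']}' {role} uses unknown "
                    f"dataset '{dataset_name}'"
                )
    return errors


# Hypothetical pipeline: the sink dataset was never created.
problems = find_broken_references(
    linked_service_names={"AzureSqlDatabaseLS"},
    datasets={"CustomersTable": "AzureSqlDatabaseLS"},
    copy_activities=[
        {"name": "CopyCustomers", "source": "CustomersTable", "sink": "CustomersArchive"}
    ],
)
for problem in problems:
    print(problem)
```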
