With the evolution of scalable cloud technology and the exponential growth of digital technologies, the preferred Data Warehouse (DW) architecture is undergoing a radical change.

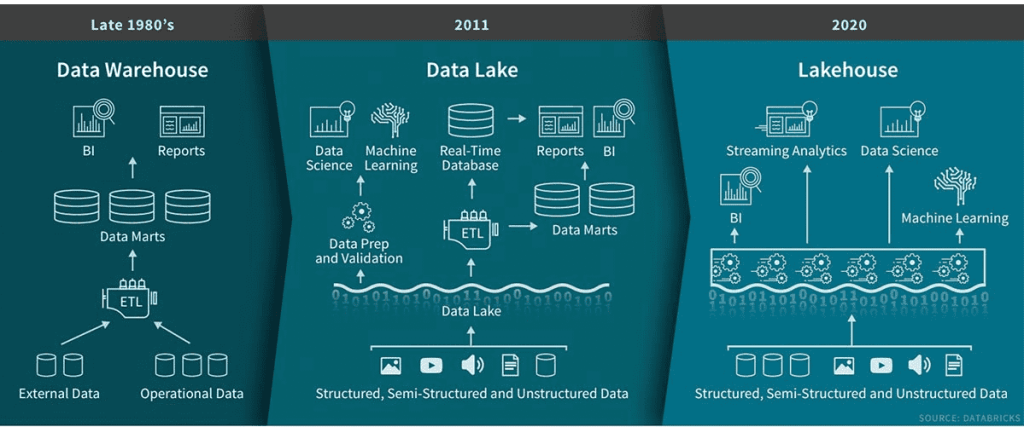

The Data Lakehouse is an evolution of the DW architecture in response to the current digital environment. To fully appreciate how we got here, let's take a brief look at how the Data Warehouse architecture has evolved since its inception in the late 1980s.

Data Warehouse: When the DW was first conceived, its main purpose was to store historical, subject-oriented data for later analysis. The traditional DW design is, quite simply, centred around Data Marts or Subject Areas. Operational data (mostly structured files and databases) is copied and transformed into data marts, which can then be used for reporting. Over time, this grew into a field of its own, now known as Business Intelligence and Reporting.

Data Lake: About a decade ago, the advent and exponential growth of the digital web and social media (also called Web 2.0) brought about a paradigm shift in the traditional DW approach, which led to the emergence of Data Lakes. A Data Lake is a pool or sink for all types of data (structured, semi-structured and unstructured) that can be used both for reporting (for example, through Business Intelligence tools such as Power BI) and for advanced analytical modelling (Data Science and Machine Learning).

Data Lakehouse: In the past few years, we have observed the following:

- Emergence of social media as a major marketing channel

- Infrastructure as a Service (IaaS) cloud technology going mainstream

- Proliferation of Data Analytics as the centrepiece of executive decision making

The combination of these three factors has set the stage for the next paradigm in DW architecture design. This new architecture is called the Data Lakehouse, or Lakehouse for short.

As the name suggests, a Lakehouse combines the capabilities of a Data Warehouse and a Data Lake. In other words, it inherits the best of both worlds: the data processing and transformation capabilities of a DW, and the low-cost storage and multi-format support of a Data Lake. Another noteworthy point is that, thanks to the evolution of Data Analytics platforms and tools (such as Power BI, Databricks and Python), the need for a separate ETL layer between the lake and the warehouse can often be eliminated.

In a future post, we will discuss how Microsoft Azure services can be used to implement a Lakehouse.

Reference: https://databricks.com/blog/2020/01/30/what-is-a-data-lakehouse.html
